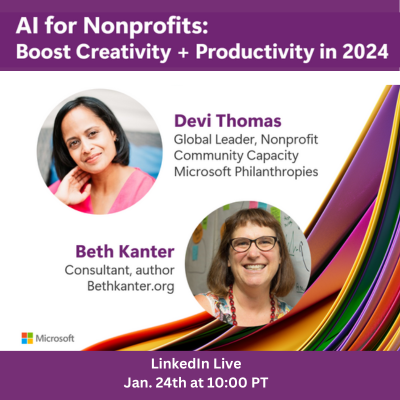

I was thrilled to have a conversation with Devi Thomas from Microsoft Philanthropies. She shared a few highlights from Microsoft’s recent nonprofit sector research. We also discussed use cases, benefits, limitations, adoption, and lots of practical tips. You can watch the video here, along with the human-curated resources shared and a Copilot-created summary transcript here. If you want to continue learning about AI for nonprofits, register for the Global Nonprofit Leaders Summit using the code VIRTUAL. Below is my fully human-generated reflection.

Highlights from Microsoft’s Nonprofit Sector Research on AI

Devi kicked off by sharing a sentiment analysis from a recent survey of nonprofit leaders, whose responses fall into one of two perceptions: comfort and concern. On the concern side:

- 60% of nonprofit leaders say they don’t trust AI to make decisions in their place

- 63% are worried about the security risks posed by AI

- 58% are worried about the steep learning curve their entire team has to go through to understand how to use generative AI

The last point highlights a perceived challenge that, in practice, becomes less daunting once users engage with tools like Copilot. The worry about a steep learning curve is really about a mindset change: letting go of doing the work from zero to 100% yourself and shifting to focusing on the final 80 to 100%.

On the comfort side:

- 63% of nonprofit leaders say that AI is trustworthy in their workplace

- 64% believe that it will enhance their creativity

- 25% of nonprofit leaders believe that the most important skill they can learn and teach their teams is knowing when to use AI and when to rely on human skills

In digging deeper into how nonprofits are using generative AI like Copilot in the workplace, the research found that 69% use it to edit their work and 65% use it to clean up their Monday morning inboxes. (I have personally found this use case extremely helpful: a one-click summary turns really long threads into actionable steps with links to the source email.) These micro-productivity examples can be applied easily to everyday work.

Use Cases

We discussed use cases beyond productivity tasks like writing and processing email. Devi shared some inspiring examples of how the technology can serve missions and stakeholders, including disaster response organizations leveraging AI to predict and respond to crises more efficiently, and educational nonprofits using AI for tailored learning experiences. Another example is using AI to optimize volunteer engagement by matching opportunities to individual skills and interests. She pointed out that there is a wide range of use cases for staff as well as volunteers. Our book, The Smart Nonprofit, presents many nonprofit use cases, and Microsoft offers many nonprofit examples as well.

Devi’s advice: understand the best use case for your organization and how you and your staff work best with the AI. Create small, low-risk experiments to answer the question: does this use case make sense for our organization? Then move on to learning and improving, but start small. I would add that it is okay not to feel pressured to go fast; with AI, it is good to move slowly.

Devi also emphasized the concept of “copilot” where the human is the pilot and always in charge. One metaphor is to think of the AI as an intern that you need to clearly communicate instructions to and then check their work and revise.

Responsible Use

In terms of responsible and ethical AI, especially for projects where AI interacts with external stakeholders, Devi walked through Microsoft’s six Responsible AI principles (accountability, inclusiveness, reliability and safety, fairness, transparency, and privacy and security of personal data) and placed them in a nonprofit context. She also introduced the term “red teaming”: deliberately testing the technology and trying to break it so you can feel confident that it will do no harm.

When it comes to using generative AI tools such as Copilot for drafts of written materials, a human must always check the output for accuracy because it can make mistakes. You need to ask for citations, read the source material, triangulate statements with other sources, and look for exaggerations or misleading statements. In other words, use all your critical thinking skills. Researchers have a term for skipping this important step of auditing the output: “asleep at the wheel.” (For more advice on getting started responsibly, see 8 Steps Nonprofits Can Take for Responsible AI Adoption and advice on drafting an acceptable use policy.)

Adoption Strategy

Devi mentioned that small nonprofits have been especially motivated to get in front of generative AI tools because the tools shift them from time scarcity to time abundance. No matter the organization’s size, it’s critical to have leadership at the executive director level deeply invested. Devi emphasized that an important step in adoption is shifting to a growth mindset and creating buy-in with staff and volunteers, an area where nonprofit adoption of Copilot tools is growing.

I curated a number of excellent AI adoption resources to help guide nonprofit strategy, including:

- AI Compass for Nonprofits | by Microsoft and AI4SP.org

- Copilot Adoption – Adoption Manager

- Microsoft Future of Work Report 2023

- Rapid Adoption KIT – Prompt Basics

To prepare for the Q&A, I asked Copilot what questions might come up and had it develop personas based on the event description and attendees. The very question an audience member asked was exactly what Copilot predicted!

The Q&A was robust, but I was struck by a question about how to pivot a nonprofit’s culture of learning, especially if you have staff who are not technology savvy. Devi emphasized that generative AI tools like Copilot are new to everyone. And because they use natural language processing, they are easier to use: you can have a conversation instead of navigating menus. Also, she noted, “It’s unbelievable to have a tool like this that allows everyone to be a techie and create our own personal copilots to do some of the boring, repetitive work or help us learn new things.”

Reflecting on Devi’s comment, I do believe that AI is a learning opportunity for everyone, but it is important to create psychological safety, where people can ask questions and know that it is okay to experiment. I think we need to approach AI with a beginner’s mind and be open to learning.

Our conversation underscored AI’s transformative potential for the nonprofit sector, alongside the importance of navigating its ethical implications. Embracing a culture of learning, ensuring leadership engagement, and approaching AI as a tool to enhance human creativity and strategy are pivotal. AI presents an opportunity to redefine nonprofit impact, making our efforts more effective and far-reaching.

Where is your nonprofit in adopting AI? How are you addressing concerns? What use cases have you explored? How are you experimenting and learning?