Here is a recent roundup of articles, blog posts, and research on the “Age of Automation” and what it means for nonprofits.

Special Report: AI for Good: Earlier this month, Allison Fine and I wrote an op-ed for the Chronicle of Philanthropy on the age of automation and its implications for fundraisers. It is part of a special report on AI for Good. While it is behind the paywall, in it we looked at some early adopters and how they use chatbots for fundraising, identified specific challenges, and laid out steps to get started. Allison Fine and I have been actively researching and writing about the Age of Automation. Other recent articles include: "Leveraging the Power of Bots for Civil Society" on the SSIR Blog and "The Robots Have Arrived: How Nonprofits Can Put Them To Work" on the Guidestar Blog.

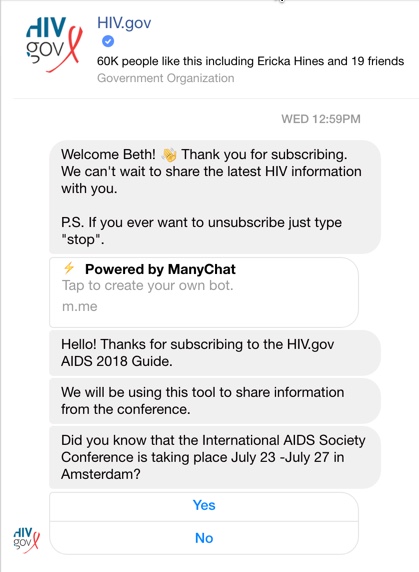

HIV.Gov launches ChatBot: HIV.gov recently launched a pilot chatbot on Facebook Messenger. The bot is designed to share information from the AIDS Society Conference, taking place July 23 to 27 in Amsterdam. The agency used the bot-authoring platform ManyChat for this pilot. HIV.gov has also written on its blog about the use of chatbots in healthcare, offering best practices, identifying current research, and suggesting ways to get started.

AI at Work Study: Future Workplace partnered with Oracle to interview 1,320 U.S. HR leaders and employees to better understand how companies are adopting AI in the workplace. The study examined how HR leaders and employees perceive the benefits of AI, the obstacles preventing AI adoption, and the business consequences of not embracing AI. The study found that while 71 percent of respondents believe AI skills and knowledge will be important in the next three years, 72 percent of HR leaders noted that their organization does not provide any form of AI training program.

AI and Implicit Bias: CNN/Money reports that AI is hurting people of color and the poor. Researchers say that artificial intelligence could fundamentally change the world while contributing to greater racial bias and exclusion. “Every time humanity goes through a new wave of innovation and technological transformation, there are people who are hurt and there are issues as large as geopolitical conflict,” said Fei Fei Li, the director of the Stanford Artificial Intelligence Lab. “AI is no exception.” Experts attending the AI Summit – Designing a Future for All said that new industry standards, a code of conduct, greater diversity among the engineers and computer scientists developing AI, and even regulation would go a long way toward minimizing these biases.

AI Can Be Sexist and Racist — It’s Time to Make It Fair: In this article in Nature, the authors argue that we must identify sources of bias, de-bias training data, and develop artificial-intelligence algorithms that are robust to skews in the data. The article gives examples showing how biases in the data often reflect deep and hidden imbalances in institutional infrastructures and social power relations, and the authors suggest what is needed to change this.

AI4ALL – AI4ALL is a nonprofit working to increase diversity and inclusion in artificial intelligence. They create pipelines for underrepresented talent through education and mentorship programs around the U.S. and Canada that give high school students early exposure to AI for social good. Their vision is for AI to be developed by a broad group of thinkers and doers advancing AI for humanity’s benefit.

Human-Centered AI – A term coined by AI researcher Fei Fei Li, director of the Stanford Artificial Intelligence Lab, for the principle that the development of AI technology must be guided, at each step, by concern for its effect on humans. More in her op-ed in the NY Times, “How To Make AI That’s Good for People.”

AI As Productivity Booster: This HBR article discusses why and how intelligent assistants (IAs) can reduce technostress and boost productivity, though, unfortunately, most people are not using them.

Beth Kanter is a consultant, author, influencer, virtual trainer, and nonprofit innovator in digital transformation and workplace wellbeing.